Request

The client came to us with a business problem disguised as a product brief. On paper, the ask was straightforward: launch passenger and driver apps, give dispatchers a better control panel, and build a backend that could support serious multi-city growth across Central Asia.

In practice, the mandate was much harder. This was not a company trying to look modern for investors. It was a company trying to compete head-on with the products riders already benchmarked against every day: Yandex Go, inDrive, and Uber. Replacing the operating model in one shot would have created chaos. The platform had to digitize the business while preserving the dispatch workflows, driver habits, and local market knowledge that still made the company viable.

That changed how we approached the project. We were not building a clean-sheet startup app with no legacy burden. We were building a new operational core for a live transportation business.

Context

Competing in Central Asia creates pressure on three fronts at once.

- Passengers expect instant booking, live ETAs, and transparent pricing.

- Drivers expect a steady order flow, simple acceptance mechanics, and earnings visibility.

- The business expects dispatch continuity, partner fleet control, and enough reliability to survive weather spikes, event traffic, and rush-hour concurrency.

The benchmark was not abstract. Riders were already used to the polish and speed of Yandex Go, the price sensitivity of inDrive, and the UX expectations set by Uber. That means a mid-sized platform cannot get away with being merely “good for a local product.” It has to feel credible next to category leaders while still outperforming them in local execution.

The existing business already had street knowledge, driver relationships, and dispatcher experience. What it lacked was a unified digital layer. Orders came from too many channels, status updates were fragmented, and scale depended too heavily on manual coordination.

That is usually the moment when companies overcorrect and try to automate everything at once. We deliberately did the opposite. We designed the system to let old and new workflows coexist, then used architecture to make that coexistence stable.

Strategy

We organized the solution around three principles.

First, the ride lifecycle had to become explicit. Booking, matching, acceptance, pickup, trip execution, and completion could not live as vague status updates spread across clients. They needed to become a strict shared state model understood by every app and service.

Second, real-time infrastructure had to be treated as a core product requirement, not a technical add-on. In transportation, latency is not a cosmetic issue. A late location update means a wrong ETA. A delayed offer means a missed driver. A weak matching flow becomes revenue loss almost immediately.

Third, rollout risk had to stay low. Dispatchers needed to keep taking calls. Drivers needed to stay on the road. Partner fleets needed a controlled onboarding path. That pushed us toward stateless services, modular clients, and clear boundaries between transactional data, volatile real-time state, and background processing.
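To make the first principle concrete, here is a minimal sketch of a strict ride state model. The state names follow the lifecycle listed above; the `CANCELLED` state, the transition table, and the `advance` helper are illustrative assumptions, not the production Orders-service code.

```python
from enum import Enum


class RideState(Enum):
    BOOKED = "booked"
    MATCHING = "matching"
    ACCEPTED = "accepted"
    PICKUP = "pickup"
    IN_TRIP = "in_trip"
    COMPLETED = "completed"
    CANCELLED = "cancelled"  # assumption: not named in the text


# Allowed transitions (hypothetical). Anything not listed is rejected.
TRANSITIONS = {
    RideState.BOOKED: {RideState.MATCHING, RideState.CANCELLED},
    RideState.MATCHING: {RideState.ACCEPTED, RideState.CANCELLED},
    # ACCEPTED -> MATCHING models a re-match after the driver drops out.
    RideState.ACCEPTED: {RideState.PICKUP, RideState.MATCHING, RideState.CANCELLED},
    RideState.PICKUP: {RideState.IN_TRIP, RideState.CANCELLED},
    RideState.IN_TRIP: {RideState.COMPLETED},
    RideState.COMPLETED: set(),
    RideState.CANCELLED: set(),
}


class InvalidTransition(Exception):
    """Raised when a client or service requests an illegal state change."""


def advance(current: RideState, target: RideState) -> RideState:
    """Return the new state, or raise if the transition is not allowed."""
    if target not in TRANSITIONS[current]:
        raise InvalidTransition(f"{current.value} -> {target.value}")
    return target
```

Because every app and service goes through the same transition table, a stale client cannot, for example, mark a ride completed before it was ever accepted.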

Operational Insights

Before finalizing the architecture, we mapped the real failure points across the operating model, not just the product surface.

For dispatchers, the problem was fragmented visibility. They needed one live operational picture across app orders, phone bookings, active drivers, and in-progress rides.

For drivers, the biggest friction was response speed. If order offers arrived late, duplicated, or without enough trip context, driver trust in the system would collapse.

For passengers, the critical moment was not registration or profile setup. It was the first booking. The product had to feel instant at the exact moment a user decided to request a ride.

For the business, the hidden issue was scaling behavior. Manual coordination can work in one city and fail completely when a second city or a large partner fleet is added. In Central Asia, that challenge becomes more visible because every new city introduces a slightly different mix of airport demand, commuter traffic, local fleet dynamics, and price elasticity. The backend had to be designed for geographic expansion from day one, even if launch demand was smaller.

Solution

The final solution was a distributed transportation platform with six connected products and a backend designed around event-driven coordination.

On the client side, we delivered passenger and driver apps for iOS and Android, a partner dashboard for fleet operators, and an admin environment where dispatchers and operations teams could manage the live system.

On the backend, we split responsibilities across dedicated services for authentication, orders, notifications, payments, and operational support. Each service owned a clear part of the system instead of competing over the same state.

That separation mattered because almost every “simple” transportation action is actually a multi-step workflow. A booking request touches identity, maps, pricing, order creation, driver search, notification delivery, and live tracking. If those concerns are not separated cleanly, the platform becomes unpredictable under load.

Architecture

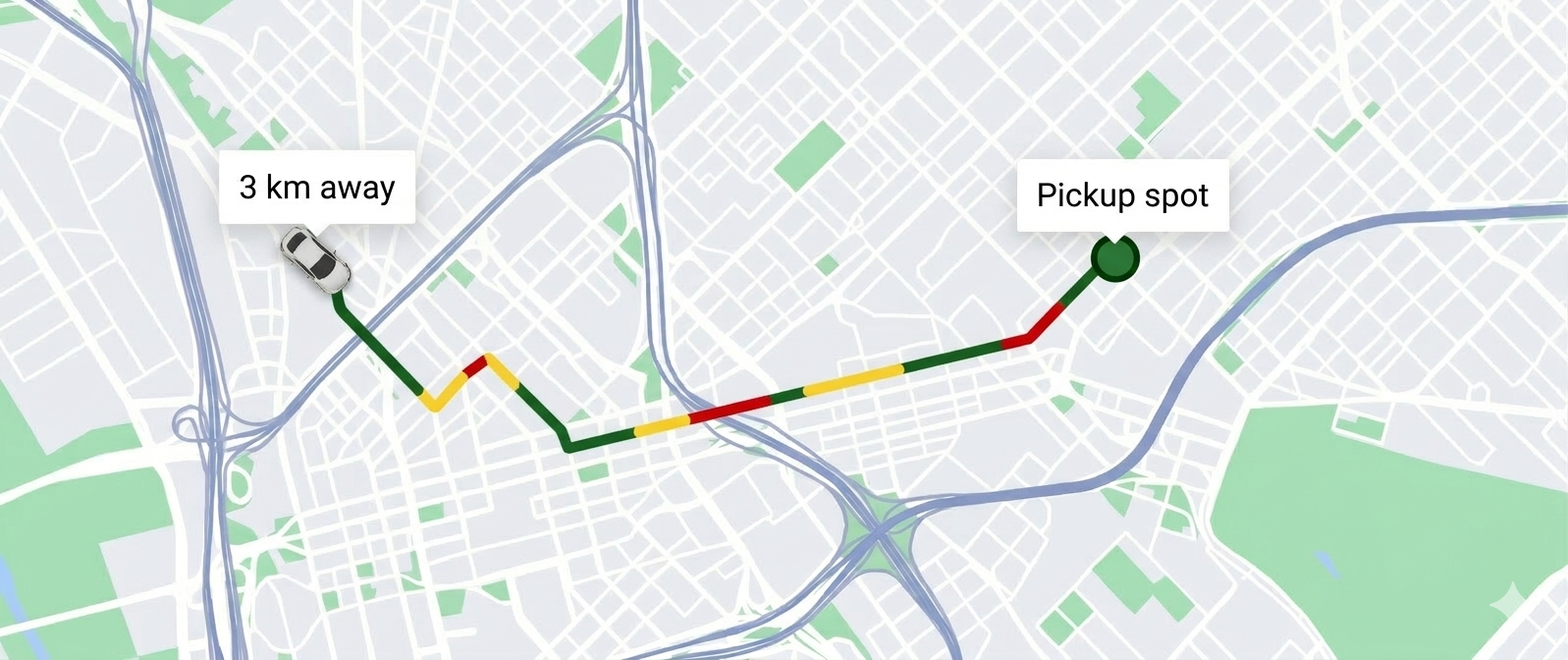

One of the first technical decisions was to treat driver telemetry as a first-class stream. A ride-hailing platform does not have one map. It has many maps updating at once: the passenger view, the dispatcher view, the matching engine, and the partner operations view. Standard request-response patterns are too expensive for that kind of constant movement.

We used EMQX and MQTT as the real-time backbone. Each driver device held a persistent connection and published location updates into dedicated topics. That let the platform distribute updates to the right subscribers without forcing clients to poll continuously.
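As an illustration of how per-driver topics let each consumer subscribe to only what it needs, the sketch below builds a location topic and checks it against MQTT topic filters. The topic layout (`drivers/<city>/<driver_id>/location`), the payload fields, and the identifiers `city-a` and `d42` are hypothetical. The wildcard rules (`+` for one level, `#` for the remainder) are standard MQTT, though this matcher is simplified and ignores `$`-prefixed system topics.

```python
import json


def location_topic(city: str, driver_id: str) -> str:
    """Build a per-driver topic (hypothetical layout)."""
    return f"drivers/{city}/{driver_id}/location"


def location_payload(lat: float, lon: float, ts: int) -> str:
    """Serialize one location update (hypothetical payload fields)."""
    return json.dumps({"lat": lat, "lon": lon, "ts": ts})


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Simplified MQTT filter matching: '+' is one level, '#' is the rest."""
    filter_parts = topic_filter.split("/")
    topic_parts = topic.split("/")
    for i, part in enumerate(filter_parts):
        if part == "#":
            return True  # multi-level wildcard matches everything below
        if i >= len(topic_parts):
            return False  # filter is longer than the topic
        if part != "+" and part != topic_parts[i]:
            return False
    return len(filter_parts) == len(topic_parts)


topic = location_topic("city-a", "d42")
# A dispatcher view for one city could subscribe with a single-level wildcard.
dispatcher_filter = "drivers/city-a/+/location"
# A passenger tracking one assigned driver subscribes to the exact topic.
passenger_filter = topic
```

With this kind of layout, the broker does the fan-out: the driver publishes once, and the dispatcher, passenger, and matching subscribers each receive the update through their own filter.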

Booking Logic

What happens before a passenger sees the ride confirmed

This is the real complexity of the product. The platform is not judged by how a ride ends, but by how quickly and reliably it can validate, route, lock, notify, and confirm the booking at the exact moment demand spikes.

Target response: < 300 ms from booking tap to initial confirmation

Step 1: Auth and trip validation

The request is authenticated, the pickup is normalized, and the trip data is validated before any expensive work begins.

Step 2: Route and fare estimation

Maps and routing services are queried to estimate price and ETA quickly enough for the booking screen to still feel immediate.

Step 3: Order creation and candidate search

The ride is persisted with a controlled initial state, then the system queries the nearest eligible drivers based on live availability and radius rules.

Step 4: Driver offer and passenger confirmation

The best candidate is reserved, the offer is dispatched through the real-time layer, and the passenger receives confirmation without waiting for the whole fleet search to complete.
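The candidate search in Step 3 can be sketched as a filter on availability and radius followed by a distance sort. This is a simplified illustration: the `Driver` fields, the `radius_km` and `limit` parameters, and the use of straight-line haversine distance are assumptions. A production system would more likely query a geo index and rank by road ETA.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Driver:
    driver_id: str
    lat: float
    lon: float
    available: bool


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometers."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def nearest_candidates(
    drivers: List[Driver], lat: float, lon: float, radius_km: float, limit: int = 5
) -> List[Driver]:
    """Return available drivers within radius_km, nearest first."""
    scored = [
        (haversine_km(lat, lon, d.lat, d.lon), d)
        for d in drivers
        if d.available
    ]
    in_range = [(dist, d) for dist, d in scored if dist <= radius_km]
    in_range.sort(key=lambda pair: pair[0])
    return [d for _, d in in_range[:limit]]
```

Returning a short ranked list rather than a single driver matters for Step 4: the first candidate can be offered immediately, and the rest are ready if that offer is declined or times out.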

Rush-Hour Safeguards

What keeps the flow from breaking under load

Driver locking

Redis locks prevent the same nearby driver from being targeted by overlapping booking waves during rush hour.

Timeout and re-match

If an offer is declined or ignored, the order re-enters matching automatically instead of falling into manual recovery.

Telemetry fan-out

MQTT distributes the same live location stream to matching, dispatch, and passenger tracking without turning the system into an HTTP polling storm.

The ride lifecycle itself was still enforced by the Orders-service as a strict state model, but the real value came from the safeguards around it. Redis locks prevented duplicate assignment attempts, timeout rules pushed unclaimed orders back into matching automatically, and the messaging layer kept status propagation fast enough for drivers, passengers, and dispatchers to trust what they were seeing.
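A minimal sketch of how driver locking and automatic re-match interact. `DriverLocks` is an in-memory stand-in for a Redis lock with an expiry (in Redis this is commonly done with `SET key value NX PX <ttl>`). The class, the `offer_next` helper, and the 15-second offer window are assumptions for illustration only.

```python
from typing import Dict, List, Optional, Set, Tuple


class DriverLocks:
    """In-memory stand-in for a per-driver lock with a time-to-live."""

    def __init__(self) -> None:
        # driver_id -> (order_id holding the lock, expiry timestamp)
        self._locks: Dict[str, Tuple[str, float]] = {}

    def acquire(self, driver_id: str, order_id: str, ttl_s: float, now: float) -> bool:
        """Take the lock only if nobody holds an unexpired one."""
        held = self._locks.get(driver_id)
        if held is not None and held[1] > now:
            return False
        self._locks[driver_id] = (order_id, now + ttl_s)
        return True

    def release(self, driver_id: str, order_id: str) -> None:
        """Release only if this order still owns the lock."""
        held = self._locks.get(driver_id)
        if held is not None and held[0] == order_id:
            del self._locks[driver_id]


def offer_next(
    order_id: str,
    candidates: List[str],
    locks: DriverLocks,
    tried: Set[str],
    now: float,
    ttl_s: float = 15.0,  # hypothetical offer window
) -> Optional[str]:
    """Pick the first candidate not yet tried and not locked by another order."""
    for driver_id in candidates:
        if driver_id in tried:
            continue
        if locks.acquire(driver_id, order_id, ttl_s, now):
            tried.add(driver_id)
            return driver_id
    return None  # nobody available right now; the caller retries or widens the radius
```

When an offer is declined or its window expires, the caller releases the lock and calls `offer_next` again. The `tried` set keeps the order from bouncing back to the same driver, and the lock keeps two overlapping orders from offering to the same driver at once.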

Demand Intelligence Engine

One of the most valuable subsystems sat slightly below the booking flow: a dedicated demand worker that turned local ride activity into a live geographic pressure map of the city.

Most transportation platforms talk about dynamic pricing as if it were a single coefficient. In reality, that approach is too blunt. Demand is not flat, and it does not move evenly. It concentrates around stations, event venues, nightlife districts, rain pockets, airport waves, and commuter corridors. We needed the platform to understand that geography in real time rather than treating the whole city as one noisy average.

This worker used H3 hexagons as the unit of spatial intelligence. Every demand action landed in a specific cell, updated local fill state, recalculated multipliers for the affected tariff, and, if the cell was already saturated, pushed the remainder outward into neighboring rings. That gave the platform something much more useful than a surge toggle. It gave it a controlled model of how pressure spreads.
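The spillover idea can be sketched on a plain axial-coordinate hex grid, used here as a stand-in for H3 cells so the example has no dependencies. The per-cell `capacity`, the even split across each ring, and the `max_ring` cutoff are assumptions. The text does not specify how the production worker divides the remainder.

```python
from typing import Dict, List, Tuple

Cell = Tuple[int, int]  # axial hex coordinates (q, r), standing in for an H3 index

DIRECTIONS: List[Cell] = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]


def hex_ring(center: Cell, k: int) -> List[Cell]:
    """Cells at exactly distance k from center (6 * k cells for k >= 1)."""
    if k == 0:
        return [center]
    q = center[0] + DIRECTIONS[4][0] * k
    r = center[1] + DIRECTIONS[4][1] * k
    cells = []
    for dq, dr in DIRECTIONS:
        for _ in range(k):
            cells.append((q, r))
            q, r = q + dq, r + dr
    return cells


def add_demand(
    fill: Dict[Cell, float],
    center: Cell,
    amount: float,
    capacity: float = 10.0,  # hypothetical per-cell capacity
    max_ring: int = 3,       # hypothetical spillover limit
) -> float:
    """Fill the center cell, then push any remainder outward ring by ring.

    Returns the demand that did not fit within max_ring.
    """
    remainder = amount
    for k in range(max_ring + 1):
        cells = hex_ring(center, k)
        share = remainder / len(cells)  # even split across the ring (assumption)
        remainder = 0.0
        for cell in cells:
            room = max(capacity - fill.get(cell, 0.0), 0.0)
            taken = min(room, share)
            fill[cell] = fill.get(cell, 0.0) + taken
            remainder += share - taken
        if remainder <= 0:
            break
    return remainder
```

For example, adding 16 units to an empty grid with a capacity of 10 saturates the center cell and spreads the remaining 6 units evenly across the six cells of the first ring, so the hotspot is represented as an area rather than a single overstated point.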

Demand Engine

A real-time geographic pressure layer for pricing and supply balance

“We did not model demand as a number. We modeled it as pressure moving through the city.”

Spatial memory

Demand was modeled as a geographic field, not as a city-wide counter, so the system could react to pressure exactly where it formed.

Recursive spillover

When a core hexagon hit capacity, the worker propagated the remainder into outer rings instead of dropping information or overstating one point on the map.

Controlled decay

TTL cleanup and multiplier recalculation let hotspots cool down predictably, which kept pricing responsive without turning the map into flickering noise.

The implementation detail that mattered most was stability. When pressure spilled into outer rings, inner cells could be temporarily frozen so the field did not oscillate every few seconds. State lived in Redis, writes were grouped through Lua scripts, and a cleanup worker continuously removed expired actions and recomputed fill values. In effect, this was not just caching. It was a real-time geographic pricing layer sitting underneath the product.
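A simplified sketch of the cleanup pass: expired demand actions are dropped, fill is recomputed per cell from what survives, and a multiplier is derived from the fill. The `DemandAction` fields, the linear multiplier ramp, and the default `capacity` and `max_multiplier` values are illustrative assumptions. Plain Python structures stand in for the Redis state and Lua-grouped writes described above, and cell freezing is left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Cell = Tuple[int, int]  # stand-in for an H3 cell index


@dataclass
class DemandAction:
    cell: Cell
    weight: float
    expires_at: float  # timestamp after which the action no longer counts


def cleanup(
    actions: List[DemandAction], now: float
) -> Tuple[List[DemandAction], Dict[Cell, float]]:
    """Drop expired actions and recompute per-cell fill from the survivors."""
    alive = [a for a in actions if a.expires_at > now]
    fill: Dict[Cell, float] = {}
    for action in alive:
        fill[action.cell] = fill.get(action.cell, 0.0) + action.weight
    return alive, fill


def multiplier(
    fill_value: float,
    capacity: float = 10.0,       # hypothetical per-cell capacity
    max_multiplier: float = 2.0,  # hypothetical price ceiling
) -> float:
    """Hypothetical linear ramp from 1.0 (empty cell) to max_multiplier (saturated)."""
    ratio = min(max(fill_value / capacity, 0.0), 1.0)
    return 1.0 + (max_multiplier - 1.0) * ratio
```

Because fill is always recomputed from unexpired actions, a hotspot cools in steps as its actions age out instead of being reset abruptly, which is the predictable decay the text describes.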

For riders, that meant fares and ETAs felt grounded in local conditions. For drivers, it meant supply signals were clearer. For the business, it meant demand hotspots could be represented, persisted, and decayed in a way that was operationally credible rather than mathematically naive.

The platform architecture diagram captures how these pieces fit together across mobile apps, dashboards, messaging, data, and infrastructure.

At the data layer, PostgreSQL remained the source of truth for transactional records, while Redis handled volatile state such as availability, session context, and matching locks. This split gave us transactional safety where the business needed integrity and memory-speed operations where the product needed responsiveness.

Delivery Decisions

Several delivery choices had an outsized impact on business stability.

We kept services stateless wherever possible so traffic could be redistributed through AWS load balancing during spikes. That protected the platform during rain events, evening peaks, and local demand bursts without forcing the team to provision every service for worst-case load all year round.

We also kept phone dispatch inside the same operating environment as app bookings. That may sound small, but it was critical. Many transportation businesses fail digital transformation because they create one system for “legacy staff” and another for “new users.” We intentionally built a unified operational view, so dispatchers could work across both channels instead of being bypassed by the product.

On the client side, the driver experience was designed for speed and low cognitive load. Offers had to be clear, answerable in one tap, and backed by reliable navigation and earnings history. In this market, driver retention is partly a UX problem, not only an incentive problem.

Tech Stack

Cloud & Infrastructure

Mobile

Web

Real-time & Messaging

Databases

Maps & Routing

Notifications

Auth & Security

Results

- Multi-city expansion within 12 months without replatforming, which confirmed that the original service boundaries were strong enough for Central Asian market rollout.

- The driver fleet grew 3x after launch, supported by smoother onboarding and a more reliable order distribution flow.

- Passenger booking felt materially faster, with booking confirmation designed to stay under the 300-millisecond target for the critical first response.

- Dispatcher operations remained intact during digitization, which protected the business from the rollout shock that usually comes with platform replacement.

- Race conditions in driver matching were removed from the normal operating path, reducing the risk of duplicate assignments and lost orders during peak periods.

- The platform moved the client from reactive operations to scalable systems thinking, giving the business room to add pricing logic, analytics, and new service tiers rather than just keeping up with competitors.